My research has spanned a

number of different areas over the years, but

generally has been driven by fundamental issues

arising in pattern recognition and its application to

real-world problems.

Electronic Voting Systems

For a summary of some of my recent work on electronic

voting, please visit the PERFECT project website

here.

Noisy Text Analytics

Errors are unavoidable in advanced computer vision

applications such as optical character recognition

(OCR), and the noise induced by these errors presents

a serious challenge to downstream processes that

attempt to make use of such data. Some of my work

involves developing techniques to measure the impact

of recognition errors on the NLP stages of a standard

text analysis pipeline: sentence boundary detection,

tokenization, and part-of-speech tagging. I have

developed a methodology that formulates error

classification as an optimization problem solvable

using a hierarchical dynamic programming approach, and

used this technique to analylze OCR errors and their

cascading effects as they travel through the pipeline.

Listed below are papers that describe this work:

“Measuring the Impact of Character Recognition Errors

on Downstream Text Analysis,” D. Lopresti,

Proceedings of Document

Recognition and Retrieval XV (IS&T/SPIE

International Symposium on Electronic Imaging),

January 2008.

“Performance Evaluation for Text Processing of Noisy

Inputs,” D. Lopresti,

Proceedings of the 20th Annual ACM

Symposium on Applied Computing (Document Engineering

Track), March 2005, Santa Fe, NM, pp.

759-763. (

Abstract)

(

PDF

83

kbytes)

“Summarizing Noisy Documents” (with H. Jing and C.

Shih),

Proceedings of the Symposium on Document

Image Understanding Technology, April 2003,

Greenbelt, MD, pp. 111-119. (

PDF

97

kbytes)

I am also co-chair of the Workshops on Analytics for

Noisy Unstructured Text Data. The first workshop,

AND 2007,

was held in Hyderabad India in January 2007 in

conjunction with the Twentieth International Joint

Conference on Artificial Intelligence (IJCAI). The

second workshop,

AND

2008, will be held in Singapore in July 2008 in

conjunction with the Thirty-first Annual International

ACM SIG-IR Conference.

A noisy text dataset I have made available to the

international research community can be found

here.

Bioinformatics

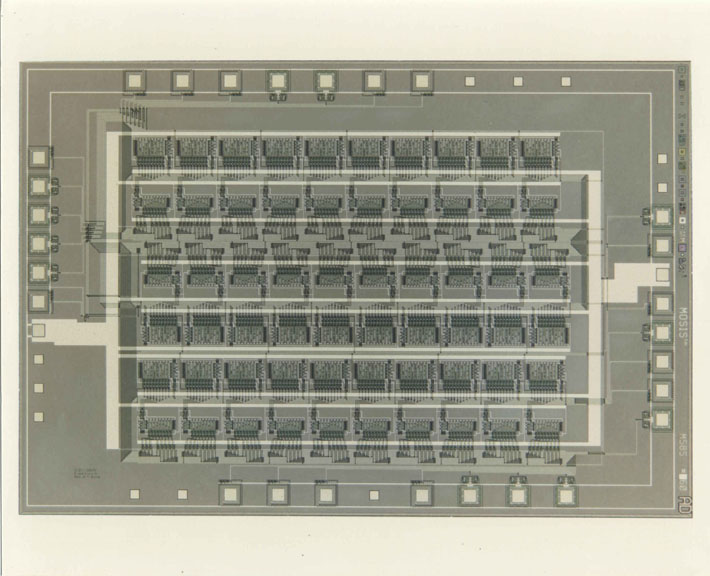

My thesis work at Princeton under Dick Lipton (now at

Georgia Tech) was some of the earliest in the area of

parallel algorithms for the rapid comparison of

genetic sequences. I built the first systolic

array for solving a combinatorial problem, P-NAC (the

"Princeton Nucleic Acid Comparator"). At Brown I

continued this research with my Ph.D. student Richard

Hughey (now at UC Santa Cruz), and also co-taught a

seminar on "Computational Aspects of Molecular and

Population Biology" with Lisa Brooks of the Biology

Department. Consulting at the Supercomputing

Research Center, I implemented sequence comparison on

SPLASH, a massively parallel reconfigurable logic

array (FPGA) architecture, work that was awarded

Honorable Mention in 1989 Gordon Bell Prize

competition.

Microphotograph of

P-NAC

Microphotograph of

P-NAC

Three of my more recent theoretical results also have

potential applications in bioinformatics.

Working with Andrew Tomkins (now at IBM Almaden), I

introduced the notion of block edit distance, which

imposes an added layer of structure on top of

traditional string matching; two strings are compared

by optimally extracting sets of substrings and placing

them into correspondence. These could, for

example, represent biological "motifs" that are shared

between two genetic sequences, but that appear in an

unknown order. Depending on whether the strings

must be matched in their entireties and/or whether

overlap is forbidden between the substrings, we showed

that the problem is either NP-complete or solvable in

polynomial time using our algorithms. (A paper

describing this work can be found

here.)

Cross-domain approximate string matching, work I did

with Gordon Wilfong at Bell Labs, extends traditional

string matching in another way, by allowing strings to

be specified compared under fundamentally different

edit models that possess their own distinct alphabets

and cost functions, which we call domains.

Transcription rules are used to map the strings from

one domain into another. The genetic code is one such

transcription process, mapping from triples of

nucleotides (codons) to the amino acids that make up

proteins. Given two RNA sequences, one might

naturally wonder how similar the proteins they code

for are. Taking this one step further, it is

easy to imagine each of the RNA sequences first

undergoing pre-processing to correct for possible

"noise" effects that may have occurred for any of a

number of reasons, biological or otherwise (e.g.,

errors in reading the sequencing gels). Our work

formulates this computation as a unified optimization

problem and solves it using a new dynamic programming

algorithm. (A paper describing this work can be

found

here.)

Gordon and I have also recently started studying an

easy-to-compute measure for quantifying the similarity

between two arbitrary graphs, which we call graph

probing distance. We have proven that this

distance yields a lower bound on the true edit

distance between any two graphs, and have conducted

experiments that suggest it provides a reliable

approximation to graph edit distance. Moreover,

our technique is very fast; graphs with tens or

hundreds of thousands of vertices can be compared in

mere seconds. Since certain problems in

bioinformatics involve comparing large graphs, it

seems quite possible that our results could be applied

there.

Security Applications Involving Speech

With the current broad-based interest in security,

speech is becoming a popular biometric. Within

the past year I have initiated two collaborations on

unconventional uses for speech.

The first of these involves algorithmic techniques for

distinguishing between humans and machines, a problem

sometimes called the "Reverse Turing Test" (RTT) or

"Human Interactive Proof" (HIP). The growth of

the Internet as a medium for distributing valuable

content has made it an attractive target for hackers

who write malicious programs ("bots") that attempt to

exploit online services. As a result, service

providers have begun instituting automatic methods for

telling whether the entities attempting to access

their websites are humans or machines. Current

RTT's are predominately graphical. Realizing

that speech-based services are proliferating and that

sensitive applications such as bank-by-phone could

someday come under attack by bots built to navigate

spoken language interfaces, I have adapted the notion

of an RTT to the speech domain working with Chilin

Shih (now at University of Illinois, Urbana) and Greg

Kochanski (now at Oxford). (A paper describing

this work can be found

here.)

In another security-related application, I have begun

working with Fabian Monrose (now at Johns Hopkins),

Mike Reiter (now at Carnegie Mellon), Peter Li (now at

Li Creative Technologies), and Susanne Wetzel (now at

Stevens Institute of Technology) on a concept for

generating cryptographically secure keys from

speech. There are two basic methodologies for

attacking such a system. The standard approach

is to attempt to reconstruct the key, perhaps with the

help of cryptanalysis techniques. A more novel

line of attack is to try to synthesize the passphrase

based on surreptitious audio recordings of the user

speaking other material. Recognizing this fact,

I have been attempting to characterize the potential

effectiveness of such attacks. Doing so will

help us achieve a better understanding of the security

of the proposed method and develop ways of making it

stronger. (A paper describing this work can be

found

here.)

Document Analysis &

Digital Libraries

My research in document

analysis relates to making intelligent use of the

information that is contained in paper and electronic

documents. I am interested in developing

techniques that are robust in the presence of errors

that invariably arise during recognition processes

(both low- and high-level), with a variety of

potential end-user applications including retrieval,

summarization, data mining, transcoding into speech,

etc.

Tables, for example, are an

important means for communicating structured

data. Well-behaved tables can be viewed as

relations in the database sense of the term, but most

often the real world is not this accommodating.

With colleagues Jianying Hu (now at IBM Yorktown

Heights), Ram Kashi (now at Avaya Research), and

Gordon Wilfong, I have studied ways of locating and

extracting tabular information so that it can be

reformulated for other uses (e.g., for querying via a

spoken language interface).

There is also an intriguing

connection between this area and my interest in

bioinformatics, as researchers have begun to consider

ways of integrating the information contained in many

disparate written sources (journal articles, database

annotations, MEDLINE entries, etc.) that all make

mention of, say, the same gene.

Performance evaluation has

been another focus of my work in recent years, as has

the fundamental nature of ground-truth, the reference

by which recognition results are judged. In a

recent paper, George Nagy (at Rensselaer Polytechnic

Institute) and I proposed that user bias, context, and

data-entry tools can play significant roles in

determining truth, and that allowances should be made

for more than one admissible ground-truth. (A

paper describing this work can be found here.)

The World Wide Web

The World Wide Web is, of

course, the largest distributed collection

of documents in the history of civilization, and has

given rise to a diverse set of new problems. I

have looked at three in particular. The first

involves the extraction of text embedded in color GIF

and JPEG images, information which is overlooked by

current commercial search engines. Indeed,

Jiangying Zhou (now at Summus Ltd.) and I authored the

first papers on this subject beginning in 1996.

Another topic of interest

to me is the comparison of the graph structures

induced by hyperlinked documents, an application of

the graph probing paradigm I developed with Gordon

Wilfong. Possible applications include

query-by-structure, information extraction, "wrapper"

maintenance, etc.

Very recently, I have begun

exploring a framework for the real-time adaptive

delivery of web images to resource-constrained devices

(e.g., PDA's on a wireless network) working with

Yunnan Wu, a current graduate student at

Princeton. Our approach integrates techniques

from image analysis, data compression, rate-distortion

optimization,

and user interaction, yielding a scaleable

representation that provides the best possible image

for a given screen size and network bandwidth ("bit

budget"). (A paper describing this work can be

found here.)